Five Popular Lies You Hear About Consciousness

Neuroscience is both more and less advanced than it’s rumored to be …

Aside from the eternal mystery of why reality exists at all rather than not, our own conscious awareness of it is likely the most baffling puzzle humans face.

Our discoveries since the dawn of neuroscience in the mid-1800s are genuinely astounding, and often profoundly counter-intuitive. But a comprehensive answer to the fundamental question of how the brain produces our mental worlds is as elusive as it’s ever been.

Sadly, popular writing on consciousness is often riddled with errors, at times making grandiose claims with no basis in fact, or rehashing philosophical debates left behind by science decades ago, or yoking neuroscience to spiritualist agendas to which it has no real connection. In an effort to clear the air at least somewhat, here are five of the most common falsehoods I encounter about consciousness in popular discussion.

#1: Consciousness is an emergent property of neural networks

There are genuine fears out there that, some day, the internet will “wake up” and become a conscious entity. At the core of this paranoia is a misunderstanding about “emergence”, the notion that systems can do things which their components cannot.

Somewhere along the line, an idea became popularized that consciousness is an “emergent property” of the brain, and by extension any neural system or “informational” network. According to this thinking, consciousness “emerges” when a networked information-processing system reaches some critical nodal mass or degree of complexity.

If we want to be persnickety about it, consciousness isn’t an emergent property at all, or even a property of any kind. Narrowly speaking, properties are fundamental to substances. The wave action of water, for example, and its surface tension are not present in water molecules. They are true emergent properties, consistently arising when large numbers of H2O molecules form bodies of water.

But in common parlance, emergent behaviors are typically covered by the “emergent properties” umbrella. For example, the functioning of an ant hill or bee hive isn’t dictated by the queen, or directed by some governing body telling everyone what to do. It arises from the collective behavior of the insects themselves, who are not even aware that they form a colony. These emergent functions are crafted by the blind progress of evolution. And as we can see, each species does its own thing. There’s no universal force which kicks in uniformly at some critical mass of insect bodies.

Consciousness is only “emergent” in that sense, as an evolved behavior, not a true property. It cannot be found in any neuron — much less in the quantum behavior of the subatomic particles forming the neuron — but only in the broader functioning of specific subsystems in the brain as an organ. Like other bodily functions, such as walking or flying, it’s crafted by a species’ particular evolutionary path. It doesn’t just happen if you connect a large number of cells together, nor large numbers of circuits or computers.

Computers, robots, or the internet will not accidentally become conscious. If we want conscious machines of any kind, we will have to design and build them that way, just as we have to design and build them to do everything else they do. And at the moment, we haven’t the faintest idea how to make that happen.

Is it possible that someday we’ll develop routines that “evolve” conscious machines? Maybe. But right now anyway, nobody has any clue how to do that either.

#2: Consciousness is so mysterious, neuroscientists can’t even define it

This claim is true in one sense, but the way that it’s typically stated is utterly false. That’s because there are two very different ways we can define things — pointing at them, so to speak, or explaining them.

For example, let’s say I’m out on my porch one evening watching the stars, and I go inside and tell my wife, “There’s this weird thing out there.” She asks, “What is it?” and I say, “It’s a big glowing green thing with an orange halo that rose up just above the trees to the south.” She goes outside with me and says, “Wow! What do you think it is?” and I say, “I have no idea.”

So I’ve been asked two times “What is it?” and I’ve given two very different answers. The first time, I referred to what I saw — it’s that thing over there that looks like this. The second time I was asked to explain what it was made of or how it operated, which I couldn’t do. I could refer to it, but not explain it.

Back in ancient times, nobody knew what the sun was in the second explanatory sense, because nobody knew how it worked in the way we do today (although they made up their own myths to fill the gap). But everyone knew what it was in the first referential sense — it was that bright disk that traveled across the sky every day and was covered by the moon in an eclipse. Nobody would ever confuse it with the moon, or the stars, or clouds, or birds.

Neuroscientists still don’t understand consciousness in the second sense. They can’t say exactly how the brain creates consciousness, or why our conscious experience is the way it is and not somehow different. But that doesn’t mean they’re confused about what they’re studying, which is what is usually implied by the “no definition” claims that are all too common on the internet. Here is a perfectly useful, plain-English definition of consciousness by Marcello Massimini:

Everyone knows what consciousness is: It is what vanishes when we fall into dreamless sleep or general anesthesia and reappears when we wake up or when we dream — in other words, it is synonymous with experience. (“Toward an Objective Index of Consciousness” 2014)

What’s more, neuroscientists have catalogs, so to speak, of various conscious percepts (often called qualia in philosophical discussions) such as colors, sounds, scents, flavors, awareness of the body, various feelings of pain, and so forth. They also have volumes of research on what the brain is doing when these are produced, so that it’s possible to know, to some extent, which percepts are being experienced just by observing neural activity. It’s now often possible to tell, by observing the brain, whether a non-communicative patient is consciously aware despite their physical paralysis.

In short, neuroscientists doing research on consciousness are not at all confused or in disagreement about what it is they are studying and trying to explain. And in fact we do have much more technical definitions than the one above by Massimini, which is directed at a general audience. Just because we haven’t yet figured out a lot of what’s going on, or why our conscious percepts have to be what they are, doesn’t mean nobody knows what the heck the word “consciousness” refers to.

#3: Trees and plants are conscious because they communicate

This is one of the more bizarre claims about consciousness, but I seem to run across it more frequently with every passing year. Perhaps the most common basis for this claim is the way trees and other plants will “warn” each other of the approach of threats like fires or predators.

Which they absolutely do. For example, if a stand of plants of a particular species is set upon by voracious insects, they might respond by releasing a chemical that’s carried by the air to their neighbors. That signal triggers the neighbors to secrete another chemical which the predators dislike, and to release the signal chemical themselves, so that the “message” spreads down the line. With any luck, the invaders might change direction and prey upon some less chatty and more poorly armed species.

And that’s a darn impressive feat of evolution, but it doesn’t require or involve consciousness. The plants don’t have any conscious awareness of the insects, much less think, “Gee, I’d better warn the neighbors!” It’s a purely mechanical process.

Although much more complex, it’s just as mechanistic as the way pendulum clocks will sync up if placed near one another in a shop. Grandfather clocks are famous for this. No matter how their pendulums are timed when they’re first placed on the floor of the clock shop, a pair of grandfather clocks will eventually swing their pendulums in unison. Not because they think that’s a cool trick, but because vibrations carried through the floor cause tiny disturbances in the swing patterns which only stop when the two clocks are operating in synchrony.
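For readers who like to see the mechanism in motion, here is a minimal sketch of that kind of mindless synchronization. It is a toy model, not a simulation of real clocks: two identical oscillators each nudge the other in proportion to how far out of step they are (a two-oscillator version of what is usually called the Kuramoto model). The function name, the coupling strength, and the starting gap are all illustrative assumptions.

```python
import math

def final_phase_gap(coupling, start_gap=2.0, seconds=20.0, dt=0.001):
    """Return the phase gap (radians) between two toy pendulums after `seconds`.

    Both pendulums have the same natural rate. `coupling` stands in for the
    tiny nudges carried through the floor; 0.0 means no shared floor at all.
    """
    rate = 2 * math.pi          # one swing per second (assumed)
    a, b = 0.0, start_gap       # starting phases, deliberately out of step
    for _ in range(int(seconds / dt)):
        # Each pendulum is pulled slightly toward the other's phase.
        nudge_a = coupling * math.sin(b - a)
        nudge_b = coupling * math.sin(a - b)
        a += (rate + nudge_a) * dt
        b += (rate + nudge_b) * dt
    # Wrap the difference so it lies between -pi and pi.
    return math.atan2(math.sin(b - a), math.cos(b - a))

print(final_phase_gap(coupling=1.0))  # shrinks toward zero: the pair locks step
print(final_phase_gap(coupling=0.0))  # stays at the starting gap
```

Nothing in the loop represents a goal or an awareness of the other pendulum. The gap closes only because each small nudge happens to push in the direction that reduces it.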

It’s also very common for folks to confuse artificial intelligence (AI) with artificial consciousness, but consciousness and intelligence are not the same thing. It’s entirely possible to have one without the other. Intelligence is a selective and adaptive response to the environment, the ability to learn how to do things differently in different circumstances. Consciousness is a subjective internal world, such as the hologram-like experience which arises in human minds when we wake up in the morning, or start to dream at night, but which is never produced by rocks or clouds.

A person under the influence of very powerful psychoactive drugs, for example, could possibly be conscious while exhibiting no intelligent behavior, lost in a hallucinogenic stupor. On the other hand, we know from experiments that we can and do learn without the involvement of conscious awareness. In fact, you haven’t really learned to do anything masterfully until you don’t have to be consciously aware of how it’s done anymore. I have long since forgotten how I tie my shoes, or drive my truck. And if you try to pay attention to exactly how you’re throwing a Frisbee or hitting a golf ball or playing a guitar, you’ll “choke” and make mistakes.

Experimenters have used subliminal exposures to prove that we learn non-consciously. They do it, for example, with a memory game in which subjects have to recall which images in a set of slides are paired. They’re told that if they don’t remember seeing some slides, just guess. Unbeknownst to the subjects, a few images are shown for durations of time below the conscious threshold, too briefly for the brain to render them as conscious percepts, even though they trigger activity in areas of the brain not involved in conscious experience. With enough exposure, subjects will “guess” the matched pairs correctly for the slides they never consciously saw at all, at a rate significantly better than random chance. Their brains learned, without bothering to make the subjects aware of it.
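“Significantly better than random chance” has a concrete meaning: the score would be very unlikely if the subject were purely guessing. Here is a rough sketch of that arithmetic, assuming every guess is independent and has the same fixed odds of being right by luck. The function name and the numbers are made up for illustration and are not taken from any actual study.

```python
from math import comb

def chance_of_at_least(hits, trials, p_lucky):
    """Probability of scoring `hits` or more out of `trials` by luck alone."""
    return sum(
        comb(trials, k) * p_lucky**k * (1 - p_lucky) ** (trials - k)
        for k in range(hits, trials + 1)
    )

# Hypothetical session: 100 two-way guesses, 60 of them correct.
print(chance_of_at_least(60, 100, 0.5))
```

When the printed probability falls below a conventional cutoff such as 0.05, luck becomes an unconvincing explanation for the score.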

There’s also the phenomenon of blindsight, a condition caused by damage to areas of the brain that add visual elements to consciousness, in people with undamaged eyes and optic nerves and an otherwise intact visual cortex. Such people can, to their own surprise, correctly “guess” the location of a light, or even follow a path, again at a rate significantly better than mere chance. Non-conscious processes in their brains are doing visual work, even if they have no way to knowingly experience it.

Bottom line, just as the internet will not gain consciousness by sheer force of network volume, artificial intelligence will also not gain consciousness by getting “smarter”. The ability to learn or adapt or communicate is simply not the same thing as conscious awareness.

#4: Consciousness determines physical reality at the quantum level

The world of the very small often behaves in ways that seem profoundly weird. One such weirdness is wave-particle duality, the observation that tiny stuff like photons and electrons can act like waves or particles, depending on what sort of experiments we decide to conduct. Or in other words, how we choose to observe them.

One set of experiments in particular has given rise to the myth that conscious choices in how we view the world determine how the fundamental pieces of physical reality behave, that how we consciously “observe” the world changes what the world actually is. But that’s not how it works.

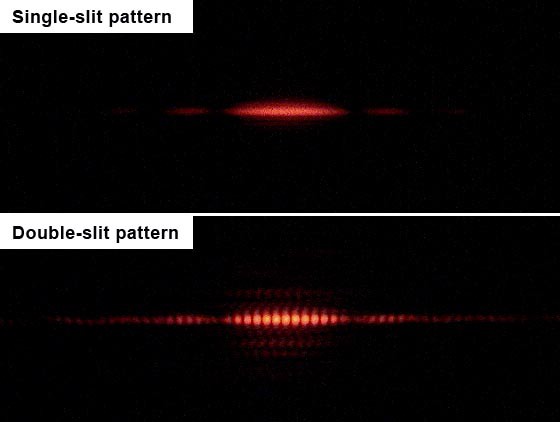

At the heart of this confusion is the famous “two-slit” experiment, which involves firing photons of light toward a barrier with two gaps in it, some of which bounce off the barrier and some of which pass through the gaps. If we put a screen on the other side of the barrier on which each photon makes a mark upon contact, something surprising happens. Instead of seeing two clusters of marks, one cluster for each group of photons that pass through the two gaps, we get several clusters of hits separated by dead-zones. Like this:

This is what happens when waves interact, called an interference pattern. Think of two pebbles dropped into a pool of water, each sending out rings of ripples. When those rings intersect, the peaks combine to form taller peaks, the troughs combine to form deeper troughs, and where peaks and troughs meet they cancel each other out to form flat spaces.
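The peak-and-trough arithmetic can be sketched in a few lines. This is a simplified toy, not a model of any real apparatus: each open slit is treated as a point source of waves, and the brightness at a spot on the screen is the squared size of the combined wave arriving there. The function name, slit spacing, wavelength, and screen distance are arbitrary assumptions in arbitrary units.

```python
import cmath
import math

def screen_brightness(x, slits, wavelength=1.0, screen_distance=100.0):
    """Relative brightness at screen position x from waves leaving each open slit."""
    k = 2 * math.pi / wavelength
    total = 0j
    for slit in slits:
        # Distance the wave travels from this slit to the spot on the screen.
        path = math.hypot(screen_distance, x - slit)
        # A wave is a rotating arrow; its angle depends on distance traveled.
        total += cmath.exp(1j * k * path)
    return abs(total) ** 2

both_open = [-2.5, 2.5]  # assumed slit positions

for x in (0.0, 10.0, 20.0):
    print(x, round(screen_brightness(x, both_open), 3))
```

At the center of the screen the two paths are equal, so the waves arrive in step and reinforce each other. About ten units to the side, one path is roughly half a wavelength longer, so peak meets trough and the spot goes nearly dark. Passing a single slit position gives the same brightness everywhere in this toy, since there is no second wave to combine with; a real slit has width, which this sketch ignores.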

If we close one gap in the barrier, the banded pattern no longer forms on the screen. The stream of photons behaves like a stream of bullets, like particles. Open it up again, and the pattern once more takes shape.

Despite the photons being fired at the barrier one at a time, each striking the screen at one point, they still show wave-like behavior between the source and the screen when both gaps are open. It’s as if each photon went through both slits as a wave and interfered with itself, then decided to behave like a particle again when it hit the screen.

Placing detectors at the gaps to find out which one each photon goes through on its way to the screen, sadly, doesn’t work because the energy involved in detecting the photon disturbs it and screws up the experiment. But physicists found a way around this problem. They placed crystals at the gaps which split each photon in two, each “twin” having half the energy of the original. One of the twins was sent toward the screen undisturbed, and the other toward a pair of detectors that distinguished which gap it passed through. Oddly enough, when they did that, the interference pattern vanished.

Even weirder, this was still true if the detector-bound photons didn’t reach the detectors until after their twins had already struck the screen. But it gets more bizarre still. Experimenters placed deflectors in the path of the detector-bound twins so that roughly half went to the detectors while the other half were effectively blocked. When the screen patterns of the two sets were teased apart, the twins of the photons that reached the detectors did not form interference patterns on the screen, while the twins of the undetected photons did. In other words, if the experiment could reveal to the scientists which gap the detector-bound photon had gone through, its screen-bound twin behaved like a particle, as though it had gone through one gap and not the other. But if the experiment hid that information from the scientists, so that they couldn’t know which gap the detector-bound photon had passed through, then its twin behaved like a wave that passed through both gaps — even though the revealing or hiding occurred after the twin struck the screen! It was as though the researchers’ awareness of one set of events determined the fate of other events which had already occurred in the past.

Now here’s where the consciousness myth comes in. It’s possible to construct the experiment in such a way that the researcher controls whether the detector-bound photons are subjected to blocking, making the decision after their twins have hit the screen. In effect, the person running the experiment chooses, after the screen-bound photon has or has not conformed with an interference pattern, which configuration its twin will go through. Some folks have interpreted this to mean that the experimenter’s conscious decision whether to view the photon as a wave or a particle determines whether it actually is a wave or a particle. Conscious choice and observation, on this view, determine reality at the most basic physical level.

There are a few problems with this interpretation. First, “observation” in such experiments doesn’t have to involve any conscious beings at all. They work with purely mechanical “observers”. An “observation” in quantum mechanics is simply the interaction of quantum events with “classical” systems, things made up of lots of atoms like screens and detectors. In a very real sense, the human “observers” are actually observing the mechanical “observations”.

Second, quantum systems simply don’t follow the same rules as classical systems. No one knows exactly why not, but they don’t. So our naive, intuitive interpretation of what happens in quantum experiments is likely to be dead wrong. And in this case, no one is choosing whether a photon is a particle or a wave, because wave-particle duality is an inherent property of photons. They are not either one or the other. Which way they appear to us depends upon how we choose to look at them. But that choice doesn’t change what they are.

#5: The nature of consciousness is a question for philosophy or computer science

Consciousness is a bodily function. The field which adds to our understanding of it is neuroscience. Computers are necessary tools for studying anything as complex and as fast as a mammalian brain. But when it comes to being conscious, no computer around today does anything at all like what our brains do.

Of course, there are some things our brains do which computers mimic quite well, such as directing mechanical arms to assemble products or to paint car chassis. But generating subjective experience, however it’s done, is a qualitatively different task, one that we don’t (yet) know how to replicate.

Consciousness is not a dataset. It is not the result of some mathematical or symbolic calculation. It’s a feat physically performed by our brain tissue, just as walking and digestion and breathing are physically performed by body tissues. We know this by observing what happens when certain parts of the brain — those that make consciousness occur — are damaged or missing, as well as when they’re working normally, and even when we intentionally alter what they do. In the 21st century, there’s no doubt that brains perform consciousness.

And even if we were to enter all the information about every cell in a human body into a computer, so that it could run a perfect simulation of that body, the computer itself would not become capable of walking or breathing or digesting food or being consciously aware. By the same token, computers capable of running simulations of weather patterns do not thereby gain wind speeds or alter the humidity inside their cases.

In short, we cannot observe what computers do and deduce how brains generate conscious awareness. We have to observe brains.

And while it’s true enough to say there’s a certain amount of philosophy underlying all science, philosophy itself isn’t what we turn to when we want to understand how our bodies work. For that, we need the biological sciences.

Anyone trying to understand consciousness through philosophical literature is very likely to end up pondering conundrums which can themselves only be answered by neuroscience anyway. I still see people discussing “Mary’s room”, for instance, as though it were a thought experiment regarding consciousness, when it more properly concerns our concept of knowledge.

The original “Mary’s room” scenario proposed a researcher studying color in a black-and-white environment, but I think it’s much easier to imagine such a person studying the sense of smell while not actually being allowed to smell the compounds being studied, as suggested by C. D. Broad.

Imagine Mary is researching how the brain generates the odors of cinnamon and cloves. She knows everything there is to know about the physical structures of cinnamon and cloves, about the organic molecules which trigger the conscious experience of their signature scents, and about what the human brain is doing from the moment those compounds hit the nose to when the experience of smelling them fades and ends. But she has never smelled either one herself.

If someone brings the spices into the lab and lets her sniff them, does she learn something? Put another way, could she tell which was which blindfolded?

The answer is that she would not be able to tell them apart just by their odors, so she would learn something new by experiencing their scents. This is the essence of the so-called hard problem of consciousness. Knowing what the brain is doing during a conscious percept cannot tell us, by itself, what that percept is. We can only link the two — the conscious experience and the neural behavior which causes it — by observing the brain when we already know which percept is being experienced. The correlation appears arbitrary.

Put another way, we can only come to understand and recognize a specific percept by having it ourselves. Broadly speaking, we could use knowledge of the body to distinguish some significantly differing percepts from one another — for example, the experience of smelling a Scotch bonnet pepper versus a lilac due to the former triggering pain — but the only way to learn to recognize a percept, to tell it apart from similar ones, is for your own brain to produce it.

Neuroscience also tells us that the capacity to produce the basic components of percepts (or qualia, or conscious experiences) is built into our brains, not learned from the world. We see the colors we see because we have brains that generate those colors. Sharks and birds perceive magnetic fields in ways that we don’t, but we will never know how magnetic fields seem to them because we can’t get our own brains to do that trick, no matter how much we know about magnetism or animal brains.

One rare case illustrating this point was discovered by neuroscientist V. S. Ramachandran, who studied a colorblind subject with color-based synesthesia, which is caused by the color-generating area of the brain being cross-stimulated by another area. If our color palettes are entirely in our brains, this subject should experience more colors in his synesthetic experiences than in his typical visual perception of objects. And sure enough, he did.

At the end of the day, while philosophy and computer science may be employed in the service of neurobiology, using either one in the absence of the latter to draw conclusions about consciousness inevitably leads down paths of badly informed speculation which can only be supported or debunked by neuroscientific research. For now, at least, consciousness is biology. Someday we may be able to build conscious machines, but not until we figure out how our own brains accomplish the feat.

So what’s it all mean?

OK, so consciousness doesn’t just arise spontaneously from neural networks. It’s not so mysterious that we can’t define it. It’s not the same thing as intelligence. It doesn’t determine physical reality. And it’s a biological function. So what?

Well, first of all, we can be a little less paranoid about the robot uprising. And we should be a bit more wary of projecting conscious awareness onto things that don’t have any reasonable mechanism to produce it — whether that’s a tree, a worm, or the entire universe.

There’s an old joke that if triangles had a god, it would have three sides. Is it any wonder that humans tend to long for a cosmic consciousness? Or for our own to survive when the body’s gone?

But we are more than our conscious awareness. We are our entire bodies and brains, capable of perceiving the distant stars, recalling our past, and planning for the future. And that in itself is mysterious and wondrous enough, without having to imagine a conscious universe or conscious machines or conscious plants.

There’s plenty to be amazed at all around us, including our own ability to be amazed.

Header image by aytuguluturk (adapted)


Paul Thomas Zenki is an essayist, ghostwriter, copywriter, marketer, songwriter, and consultant living in Athens, GA.